I’ve had a chance now to read the letter that vice-chair Kris Kobach has sent to the states, requesting that they send the Pence Commission copies of their publicly available voter files. My initial reactions fall into two buckets, the small and the expansive.

I want to make clear that there is no intrinsic problem with matching voting lists against other lists and reporting the results. In fact, valuable insights can emerge from linking voter records. I don’t know a better way to advance knowledge and practice than to conduct research, report the results, and then hash out what they mean.

But here’s the caveat. As a social scientist who has conducted voter roll matching both for scientific research and for litigation, I know how hard it is to do this right. For example, the well-known “birthday problem” means that in a large enough list, two different people who share a name are surprisingly likely to share a birthdate as well, so they can be mistakenly matched to one another. Few people have the expertise to handle these complexities correctly. Just as litigation is rarely the best vehicle to advance the science of a field, I worry about developing matching routines on the fly in the context of a commission that is controversial.
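To give a rough sense of the birthday problem mentioned above, here is a minimal Python sketch. It assumes birthdates are uniformly distributed, which real birthdates are not, and the function names and the 80-year span of birth years are illustrative assumptions rather than anything taken from an actual matching protocol.

```python
from math import comb


def prob_shared_birthdate(n: int, days: int = 365) -> float:
    """Probability that at least two of n people share a birthdate.

    Assumes every one of the `days` possible birthdates is equally likely.
    """
    if n > days:
        return 1.0
    p_all_distinct = 1.0
    for k in range(n):
        p_all_distinct *= (days - k) / days
    return 1.0 - p_all_distinct


def expected_coincident_pairs(n: int, days: int) -> float:
    """Expected number of pairs, among n people, who share a birthdate."""
    return comb(n, 2) / days


if __name__ == "__main__":
    # Classic version: month and day only, 365 possible values.
    print(prob_shared_birthdate(23))

    # Hypothetical scenario: 1,000 registrants who all share one common
    # name, with full dates of birth spread over an assumed 80 birth years.
    print(expected_coincident_pairs(1000, days=365 * 80))
```

In the classic version, 23 people are enough to push the chance of a shared birthday past one half. The second call illustrates why a common name combined with a matching date of birth is weak evidence, on its own, that two records describe the same person.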

Now on to the letter.

I am well aware that many people view with skepticism the appointment of the Pence Commission. I have nothing to add to the partisan fight over the commission’s appointment and work. Instead, my reaction is from the perspective of someone who believes that careful empirical research is the best path to improving elections in America.

Two small-bore details jumped out at me when I read the letter.

First, the letters were apparently addressed to all the Secretaries of State, even though the chief election officer (CEO) is not always the Secretary. (Here’s the link to the list of CEOs in each state.) Certainly support staff in SOS offices know how to forward e-mail, but small misfires such as this suggest a lack of care in the process of making the request for the voter files.

Second, the letters ask for the “publicly-available voter roll data,” including “dates of birth, political party …, last four digits of social security number if available…” The terms “publicly-available” and “social security number” don’t belong together in the same sentence. Furthermore, if the goal is to use these lists to do matching with other lists, there is no reason to specify political party.

Again, small details are telling.

But the request raises much bigger issues. The letter reflects a naive understanding of how to go about voter list matching. The letter isn’t a whole lot different from the requests I’ve seen or heard about over the years from graduate students and researchers just getting into the field. The letter seems to suggest that each state’s data set is just sitting in a computer waiting to be dragged from a folder onto a thumb drive, or, in this case, uploaded to an FTP site. Piece of cake.

Not so, and in so many ways. Here are just a few:

- Many states don’t allow the sharing of voter files with anyone other than candidates or in-state political parties. (Michael McDonald has a nice summary of some of these issues as of 2015 here. Paul Gronke wrote about this in Pew’s Data for Democracy in 2008.) I suspect that all sorts of groups are preparing their lawsuits right now seeking to enjoin states with such restrictions from sharing these files with the Commission. If a state with such a law ends up providing the list anyway, the lawsuits will now come back to the states asking why other groups can’t get access to these lists, contrary to law.

- Some states charge lots of money for these lists. In 2015, McDonald estimated it would cost over $120,000 to pay the fees to acquire these lists, if it were allowed. Will the Commission pay? If a state with high fees provides the list for free, how soon will the lawsuit be coming to demand that all requests for the voter files be granted gratis?

- In many cases, the voter files are not available as a single file in a single place. Who on the Commission’s staff is going to call each of the 351 city and town clerks in Massachusetts, asking them each for a copy of their municipality’s voter list?

- The voter files are not in standard formats. Each of the 51 files, if they were all assembled, would need to be cleaned and prepared, and most of them would take hours, if not days, to prepare for analysis.

- Matching between the data sets — including matching with non-voter lists such as immigration lists — is hard. It will be especially hard to match with immigration lists because I suspect that few states will share actual dates of birth and none will share the last four digits of the social security number. This will be on top of the difficulties that arise because of typos and inconsistent data entry standards. Therefore, any matches that are done will be suspect from the start. This is already the case when states — which are in full control of their voter lists — do their own cross-list matching. You want to match on the actual non-public voter files, and they will not be made available to the Commission.

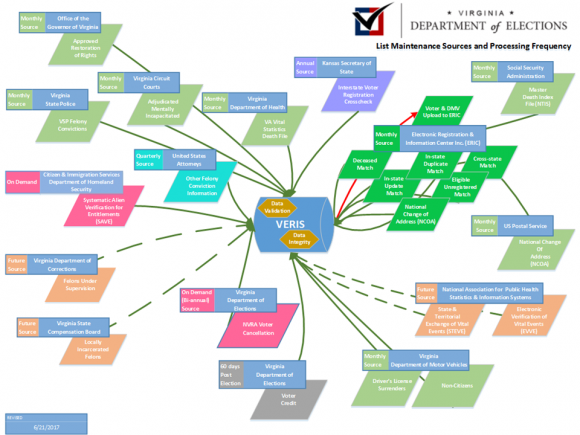

- Federalism and state control. In my seventeen years of working in this field, I have heard one refrain more than any other, particularly from Secretaries of State: No National Voter File. One of the early obstacles in creating the Electronic Registration Information Center (ERIC) was the worry that it would create a national file. ERIC has been designed to make that impossible. How does the request that each state deposit its voter file on a military server relate to existing opposition on many fronts to creating a single national voter file?

- Privacy. Researchers working in this area are well aware that the information contained in voter files is highly sensitive. State laws and practices already reflect this sensitivity. So does federal law. There is no information in the letter about the privacy parameters associated with sharing the voter file. Indeed, there is the troubling sentence that says, “Please be aware that any documents that are submitted to the full Commission will also be made available to the public.” Does this apply to the voter files? Does this apply to the results of matching procedures?
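To make the points above about file formats and typos a little more concrete, here is a minimal Python sketch of the kind of cleaning and comparison a matching routine has to do before any two records can be compared. The field names (`name`, `dob`), the list of date formats, and the 0.85 similarity threshold are all assumptions chosen for illustration; a real protocol would be designed and validated far more carefully.

```python
import re
from datetime import datetime
from difflib import SequenceMatcher
from typing import Optional

# Assumed examples of date layouts that different files might use.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y%m%d")


def normalize_name(raw: str) -> str:
    """Uppercase, drop punctuation and digits, collapse whitespace."""
    letters_only = re.sub(r"[^A-Za-z ]", "", raw)
    return " ".join(letters_only.upper().split())


def normalize_dob(raw: str) -> Optional[str]:
    """Return the date as YYYY-MM-DD, or None if no known format fits."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def name_similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] between two names after normalization."""
    return SequenceMatcher(None, normalize_name(a), normalize_name(b)).ratio()


def is_candidate_match(rec_a: dict, rec_b: dict, threshold: float = 0.85) -> bool:
    """Flag two records for review: same date of birth and similar names.

    A flagged pair is only a candidate; it still needs manual review.
    """
    dob_a = normalize_dob(rec_a["dob"])
    dob_b = normalize_dob(rec_b["dob"])
    if dob_a is None or dob_b is None or dob_a != dob_b:
        return False
    return name_similarity(rec_a["name"], rec_b["name"]) >= threshold


if __name__ == "__main__":
    voter = {"name": "John Smith", "dob": "07/04/1980"}
    other = {"name": "john  smyth", "dob": "1980-07-04"}
    print(is_candidate_match(voter, other))
```

Note that this sketch leans entirely on a full date of birth. If a state withholds that field, the comparison falls back on names alone, which is where coincidental matches multiply.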

For each of the many Voting Rights Act cases that have involved database matching, the cost to conduct those analyses has run well into the six figures. And those were relatively simple, because the data were better and the number of databases being matched was much smaller. The protocols were also carefully designed, because they were developed under the watchful eye of a court. If the Commission intends to conduct a comprehensive matching exercise, this will be a multi-million dollar, multi-month operation. Because the Commission has the full support of the White House, I am sure the resources will be brought together to conduct whatever matching the Commission wants conducted, but is this the best use of these resources for this goal?

I have alternative thoughts about how the resources could be better spent, but the details will have to wait until another day. To repeat what I said at the top of this post, I do think that voter list matching is important and valuable. It should be conducted by the states on a regular basis, with the results being made public, warts and all. As I wrote about last week, Virginia already provides a good example of a state doing intensive work, and I know they would like to do even more. (And, here is a link to the type of report Virginia regularly releases. Warning: it’s 10,673 pages long.)

But, for states to do this, we need to bring together all the stakeholders to help create the protocols that allow for accurate matching. My own grand plan is something like this:

- Every state should join ERIC, which has been shown to be very helpful in dealing with the most basic and common “list hygiene” problems.

- A group of state and local officials, academics, and federal government officials should get together and design agreed-upon protocols (which would combine automated and manual processes) for the auditing of state voter files by comparison with other states’ voter files, other state records, and federal government records. Part of this agreement should include a plan to grant states direct matching access to federal data sets that are currently off limits to bulk matching. This auditing standard should also account for other problems with voter files, such as typos.

- States themselves would then regularly conduct list audits using the protocols identified in the previous step and make the results of those audits public.

The existence of the Pence Commission is controversial already, and the day has now come that one of its most controversial activities has begun. My intention here is to sidestep the political controversy and suggest two other things have been under-appreciated. First, the Commission’s eyes may be bigger than its stomach. Acquiring voter files from every state and matching them — among themselves and with other databases — will be a quagmire. Second, public auditing of voter files based on database matching (and other procedures) is something that should be done more often and more publicly. Because we have entrusted states to manage the voter files — for better or worse — a state-directed initiative would seem a better strategy than a controversial, high-visibility activity of a temporary federal commission.